The Helicone Blog

Thoughts about the future of AI - from the team helping to build it.

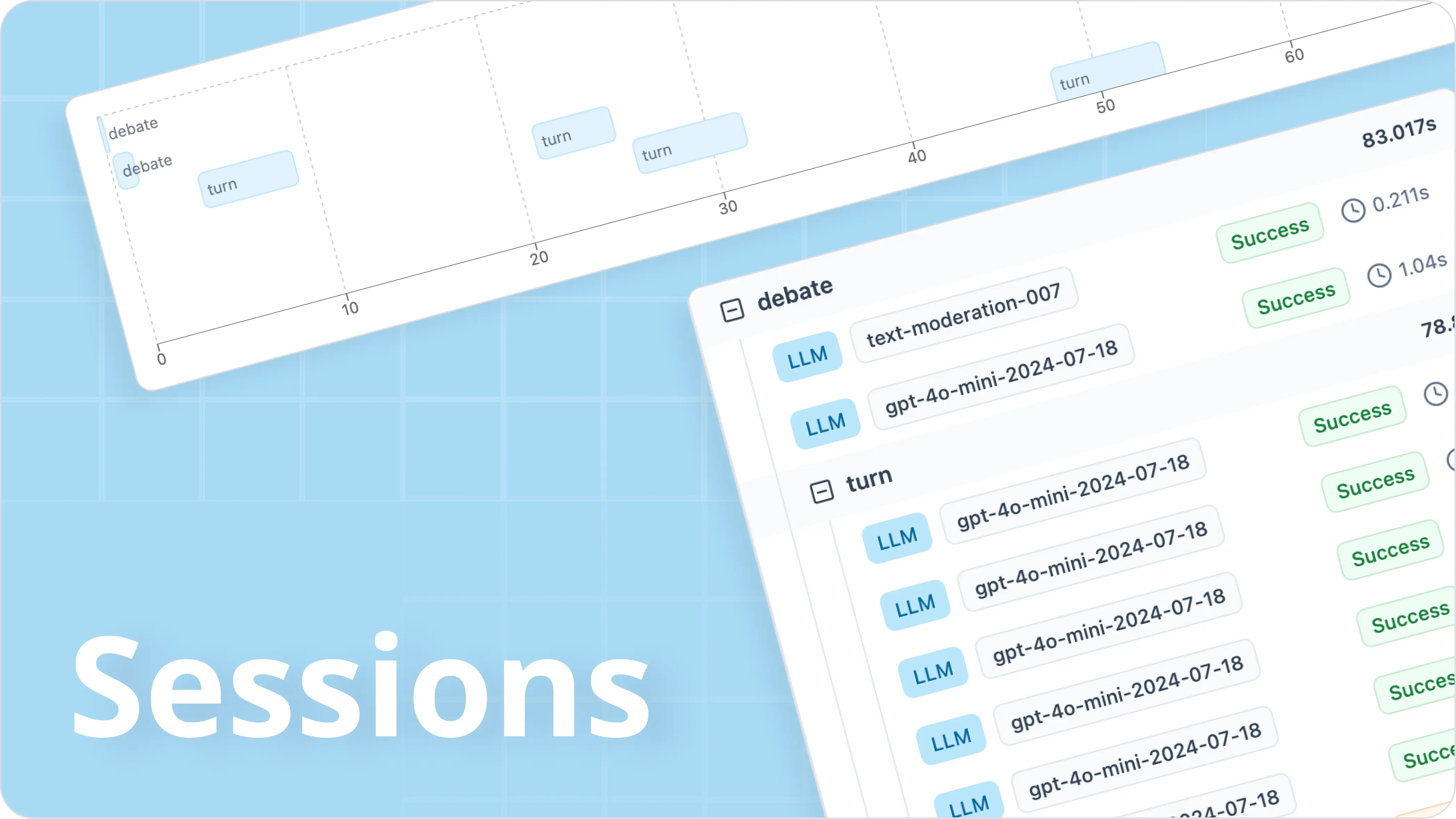

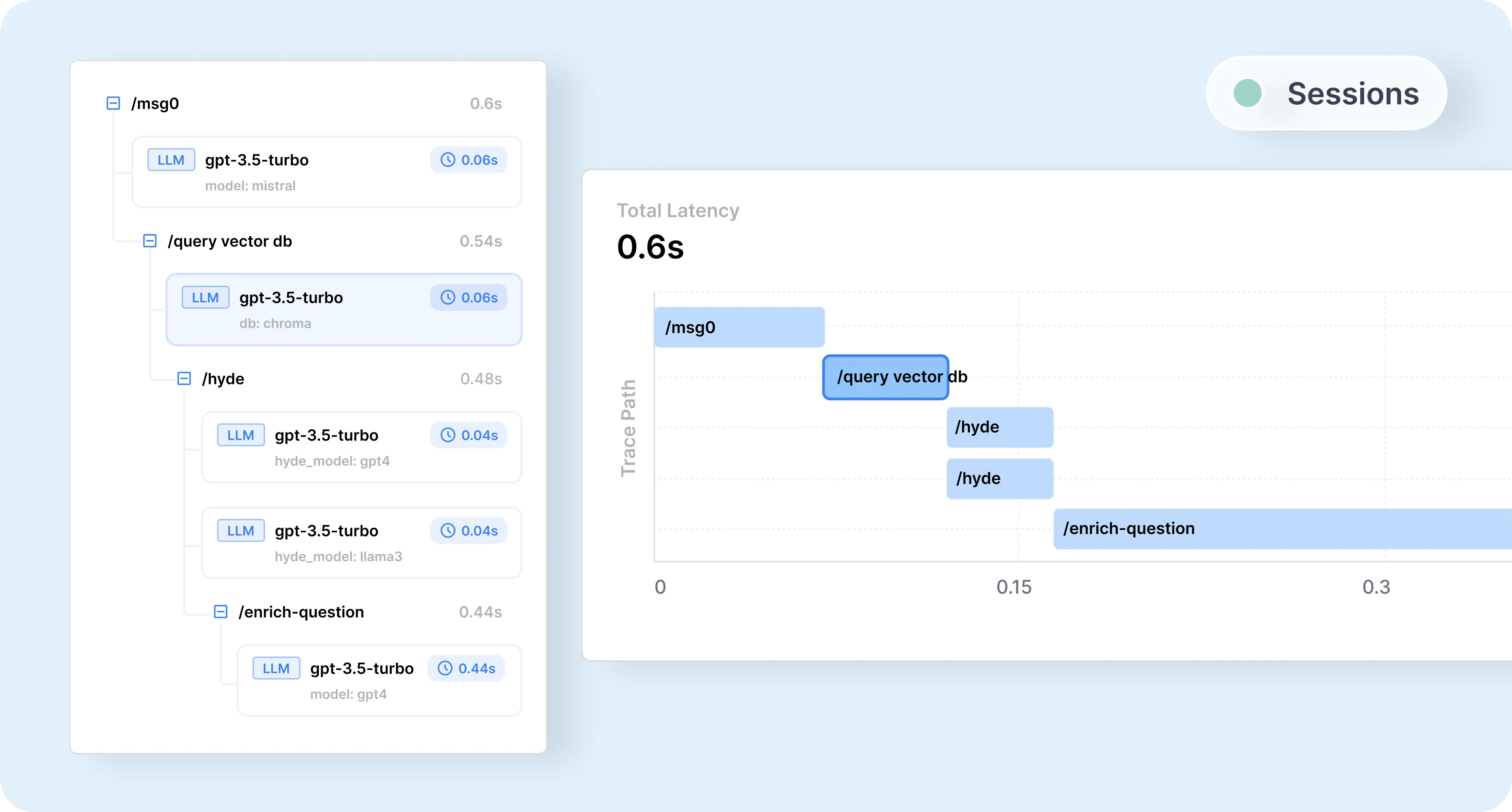

Debugging RAG Chatbots and AI Agents with Sessions

Have you ever wondered at which stage of a multi-step process your AI model starts hallucinating? Perhaps you've noticed consistent issues with a specific part of your AI agent workflow?

Lina Lam

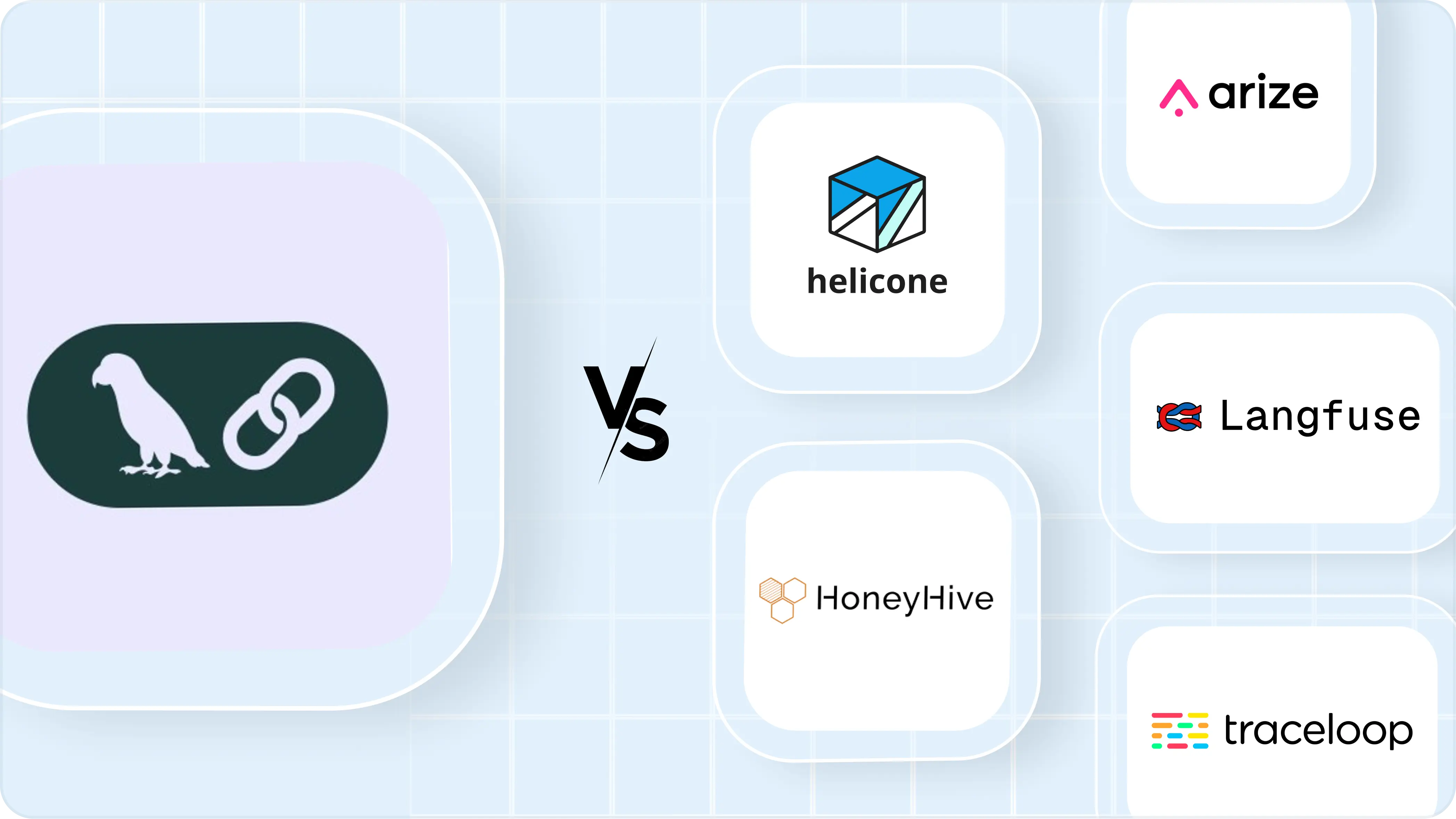

Braintrust Alternative? Braintrust vs Helicone

Compare Helicone and Braintrust for LLM observability and evaluation in 2024. Explore features like analytics, prompt management, scalability, and integration options. Discover which tool best suits your needs for monitoring, analyzing, and optimizing AI model performance.

Cole Gottdank

Optimizing AI Agents: How Replaying LLM Sessions Enhances Performance

Learn how to optimize your AI agents by replaying LLM sessions using Helicone. Enhance performance, uncover hidden issues, and accelerate AI agent development with this comprehensive guide.

Cole Gottdank

What We've Shipped in the Past 6 Months

Join us as we reflect on the past 6 months at Helicone, showcasing new features like Sessions, Prompt Management, Datasets, and more. Learn what's coming next, along with a heartfelt thank you for being part of our journey.

Cole Gottdank

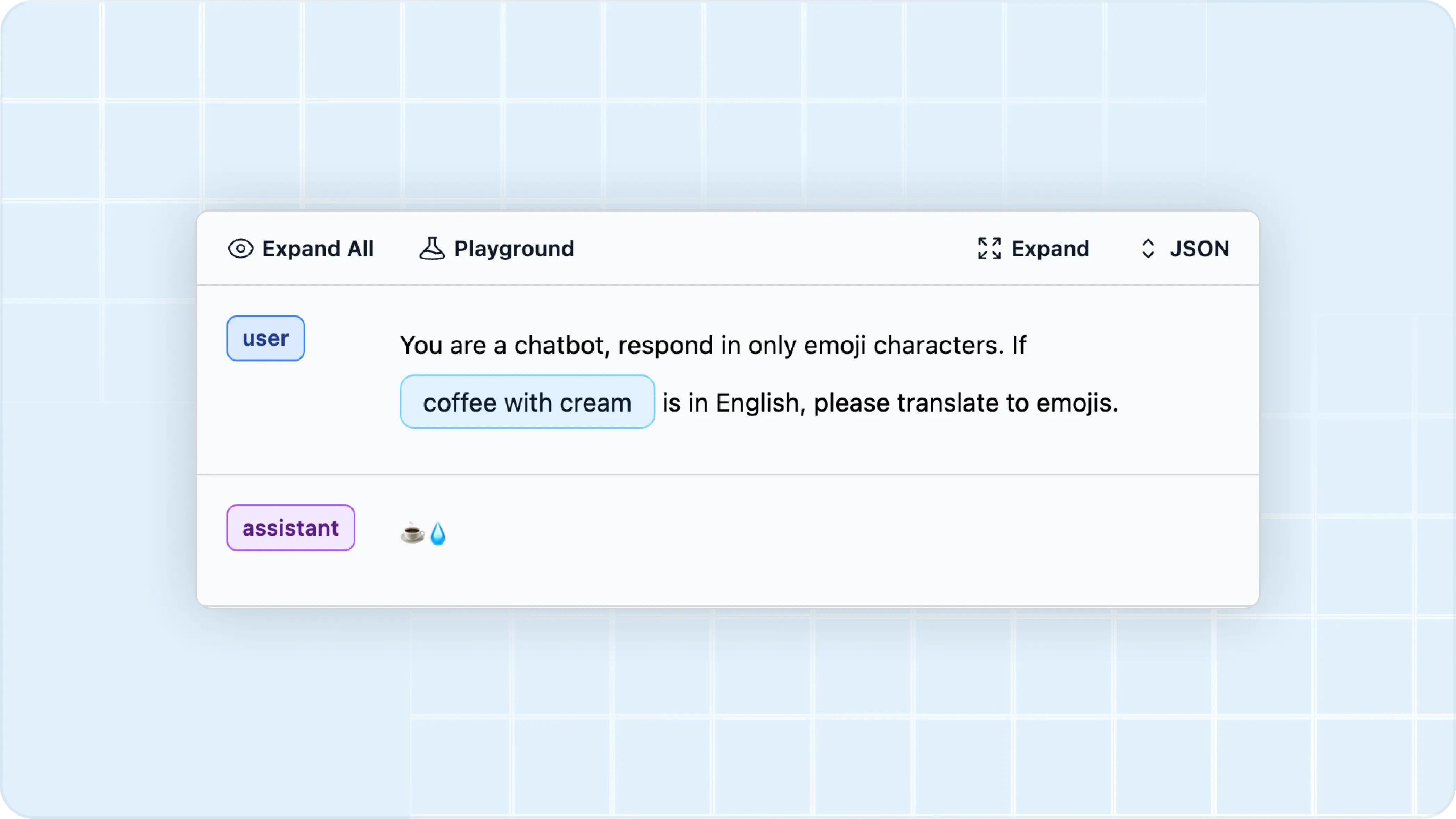

Prompt Engineering: Tools, Best Practices, and Techniques

Writing effective prompts is a crucial skill for developers working with large language models (LLMs). Here are the essentials of prompt engineering and the best tools to optimize your prompts.

Lina Lam

Five questions to determine if LangChain fits your project

Explore five crucial questions to determine if LangChain is the right choice for your LLM project. Learn from QA Wolf's experience in choosing between LangChain and a custom framework for complex LLM integrations.

Cole Gottdank

6 Awesome Platforms & Frameworks for Building AI Agents (Open-Source & More)

Today, we are covering 6 of our favorite platforms for building AI agents — whether you need complex multi-agent systems or a simple no-code solution.

Lina Lam

Portkey Alternatives? Portkey vs Helicone

Compare Helicone and Portkey for LLM observability in 2024. Explore features like analytics, prompt management, caching, and integration options. Discover which tool best suits your needs for monitoring, analyzing, and optimizing AI model performance.

Cole Gottdank

5 Powerful Techniques to Slash Your LLM Costs by Up to 90%

Building AI apps doesn't have to break the bank. We have 5 tips to cut your LLM costs by up to 90% while maintaining top-notch performance—because we also hate hidden expenses.

Lina Lam

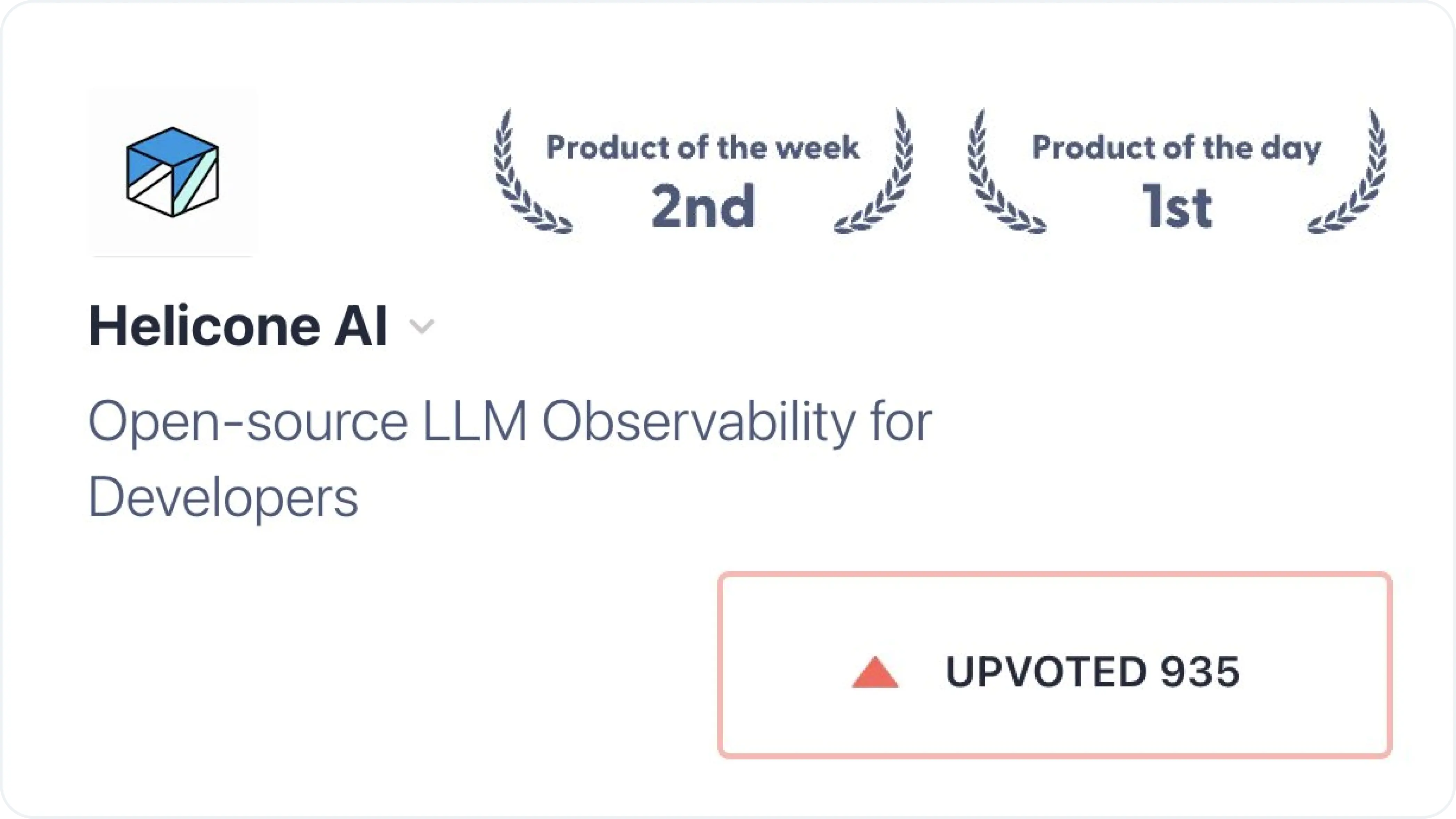

Behind 900 pushups, lessons learned from being #1 Product of the Day

By focusing on creative ways to activate our audience, our team managed to get #1 Product of the Day.

Lina Lam

How to Automate a Product Hunt Launch - Lessons from Helicone's Success

Learn how to become Product of the Day on Product Hunt through automation. Discover key strategies for automating user emails, social media content, and DM campaigns, based on Helicone's experience becoming Product of the Day.

Cole Gottdank

Helicone vs. Arize Phoenix: Which is the Best LLM Observability Platform?

Compare Helicone and Arize Phoenix for LLM observability in 2024. Explore open-source options, self-hosting, cost analysis, and LangChain integration. Discover which tool best suits your needs for monitoring, debugging, and improving AI model performance.

Cole Gottdank

Langfuse Alternatives? Langfuse vs Helicone

Compare Helicone and Langfuse for LLM observability in 2024. Explore features like analytics, prompt management, caching, and self-hosting options. Discover which tool best suits your needs for monitoring, analyzing, and optimizing AI model performance.

Cole Gottdank

4 Essential Helicone Features to Optimize Your AI App's Performance

This guide provides step-by-step instructions for integrating and making the most of Helicone's features - available on all Helicone plans.

Lina Lam

How to redeem promo codes in Helicone

On August 22, Helicone will launch on Product Hunt for the first time! To show our appreciation, we have decided to give away $500 in credit to all new Growth users.

Lina Lam

The LLM Stack: A New Paradigm in Tech Architecture

Explore the emerging LLM Stack, designed for building and scaling LLM applications. Learn about its components, including observability, gateways, and experiments, and how it adapts from hobbyist projects to enterprise-scale solutions.

Justin Torre

The Evolution of LLM Architecture: From Simple Chatbot to Complex System

Explore the stages of LLM application development, from a basic chatbot to a sophisticated system with vector databases, gateways, tools, and agents. Learn how LLM architecture evolves to meet scaling challenges and user demands.

Justin Torre

What is Prompt Management?

Iterating on your prompts is the #1 way to optimize user interactions with large language models (LLMs). Should you choose Helicone, Pezzo, or Agenta? We will explore the benefits of choosing a prompt management tool and what to look for.

Lina Lam

Meta Releases SAM 2 and What It Means for Developers Building Multi-Modal AI

Meta's release of SAM 2 (Segment Anything Model for videos and images) represents a significant leap in AI capabilities, revolutionizing how developers and tools like Helicone approach multi-modal observability in AI systems.

Lina Lam

What is LLM Observability and Monitoring?

Building with LLMs in production (well) is incredibly difficult. You have probably heard the term 'LLM observability'. What is it? How does it differ from traditional observability? What is observed? We have the answers.

Lina Lam

Compare: The Best LangSmith Alternatives & Competitors

Observability tools allow developers to monitor, analyze, and optimize AI model performance, which helps overcome the 'black box' nature of LLMs. But which LangSmith alternative is the best in 2024? We will shed some light.

Lina Lam

Handling Billions of LLM Logs with Upstash Kafka and Cloudflare Workers

We desperately needed a solution to these outages/data loss. Our reliability and scalability are core to our product.

Cole Gottdank

Best Practices for AI Developers: Full Guide (June 2024)

Achieving high performance requires robust observability practices. In this blog, we will explore the key challenges of building with AI and the best practices to help you advance your AI development.

Lina Lam

I built my first AI app and integrated it with Helicone

So, I decided to make my first AI app with Helicone - in the spirit of getting first-hand exposure to our users' pain points.

Lina Lam

How to Understand Your Users Better and Deliver a Top-Tier Experience with Custom Properties

In today's digital landscape, every interaction, click, and engagement offers valuable insights into your users' preferences. But how do you harness this data to effectively grow your business? We may have the answer.

Lina Lam

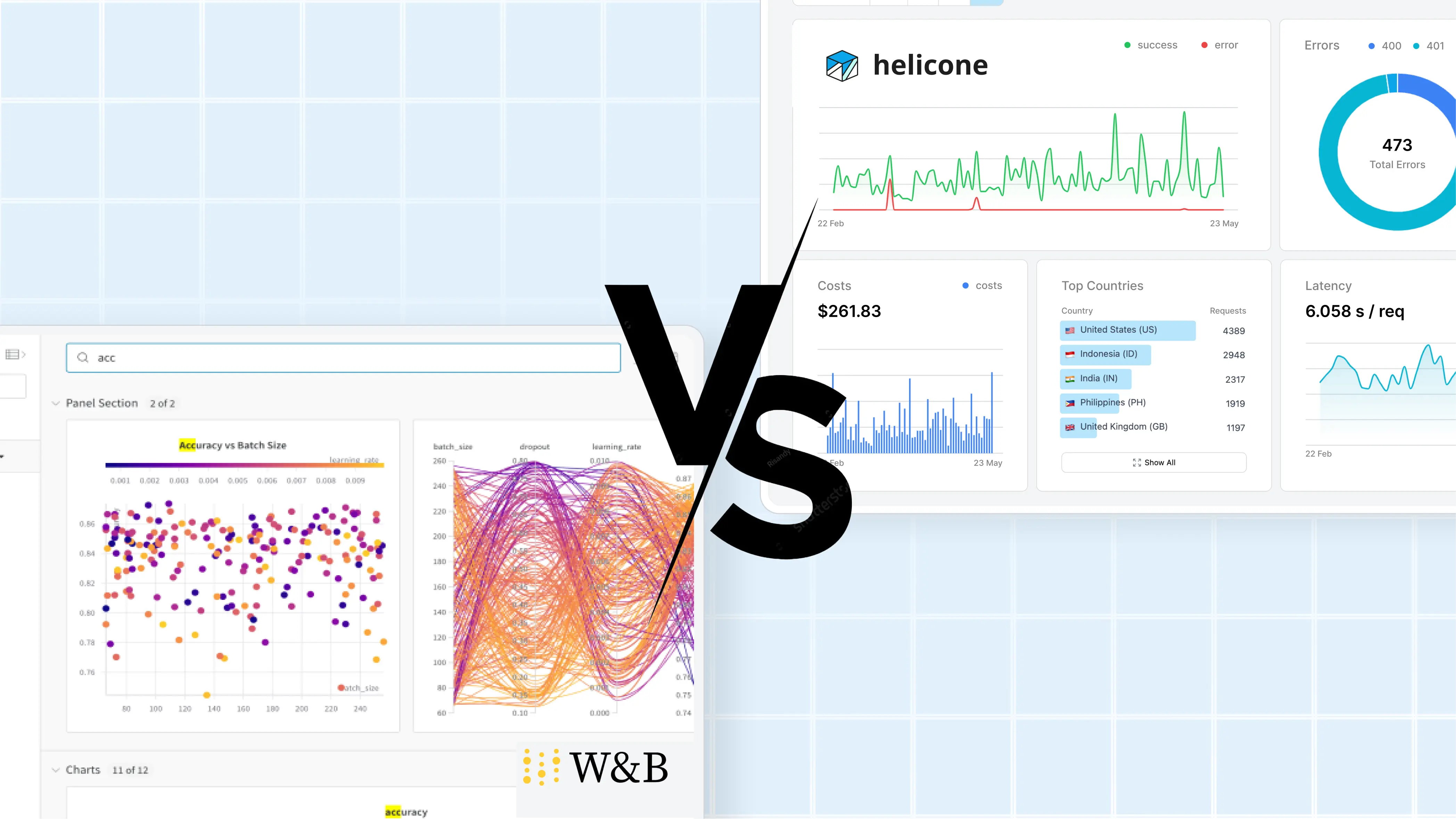

Helicone vs. Weights and Biases

Training modern LLMs is generally less complex than training traditional ML models. Here's how to have all the essential tools specifically designed for language model observability without the clutter.

Lina Lam

Insider Scoop: Our Co-founder's Take on GitHub Copilot

No BS, no affiliations, just genuine opinions from Helicone's co-founder.

Cole Gottdank

Lina Lam

Insider Scoop: Our Founding Engineer's Take on PostHog

No BS, no affiliations, just genuine opinions from the founding engineer at Helicone.

Stefan Bokarev

Lina Lam

A step by step guide to switch to gpt-4o safely with Helicone

Learn how to use Helicone's experiments features to regression test, compare and switch models.

Scott Nguyen

An Open-Source Datadog Alternative for LLM Observability

Datadog has long been a favourite among developers for its application monitoring and observability capabilities. But recently, LLM developers have been exploring open-source observability options. Why? We have some answers.

Lina Lam

A LangSmith Alternative that Takes LLM Observability to the Next Level

Both Helicone and LangSmith are capable, powerful DevOps platforms used by enterprises and developers building LLM applications. But which is better?

Lina Lam

Why Observability is the Key to Ethical and Safe Artificial Intelligence

As AI continues to shape our world, the need for ethical practices and robust observability has never been greater. Learn how Helicone is rising to the challenge.

Scott Nguyen

Introducing Vault: The Future of Secure and Simplified Provider API Key Management

Helicone's Vault revolutionizes the way businesses handle, distribute, and monitor their provider API keys, with a focus on simplicity, security, and flexibility.

Cole Gottdank

Life after Y Combinator: Three Key Lessons for Startups

From maintaining crucial relationships to keeping a razor-sharp focus, here's how to sustain your momentum after the YC batch ends.

Scott Nguyen

Helicone: The Next Evolution in OpenAI Monitoring and Optimization

Learn how Helicone provides unmatched insights into your OpenAI usage, allowing you to monitor, optimize, and take control like never before.

Scott Nguyen

Helicone partners with AutoGPT

Helicone is excited to announce a partnership with AutoGPT, the leader in agent development.

Justin Torre

Generative AI with Helicone

In the rapidly evolving world of generative AI, companies face the exciting challenge of building innovative solutions while effectively managing costs, result quality, and latency. Enter Helicone, an open-source observability platform specifically designed for these cutting-edge endeavors.

George Bailey

(a16z) Emerging Architectures for LLM Applications

Large language models are a powerful new primitive for building software. But since they are so new—and behave so differently from normal computing resources—it's not always obvious how to use them.

Matt Bornstein

Rajko Radovanovic

(Sequoia) The New Language Model Stack

How companies are bringing AI applications to life

Michelle Fradin

Lauren Reeder